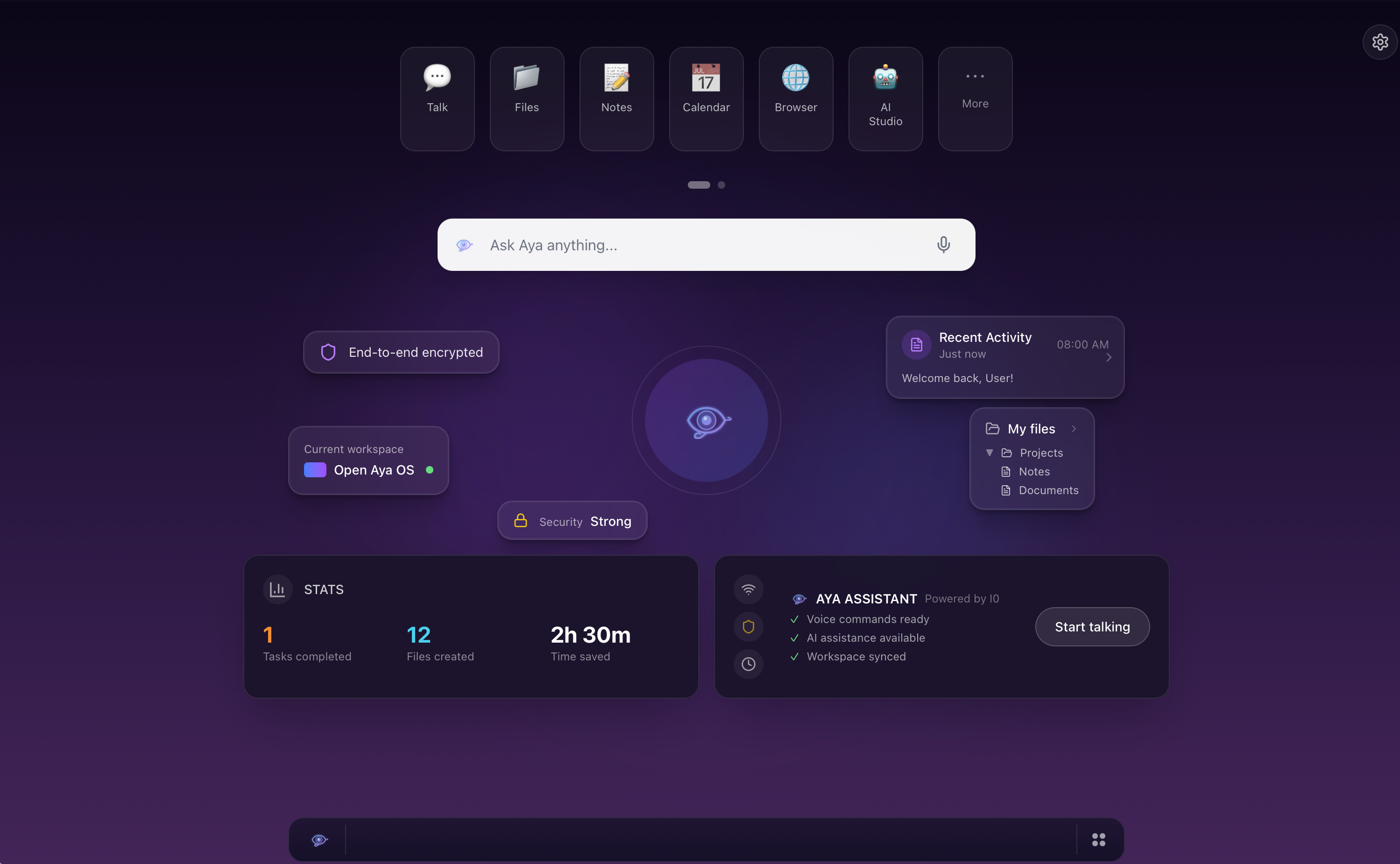

Your apps, your AI, your space -- in any browser, on any device.

The Shift

For decades, your most-used interface -- the home screen -- has been locked to hardware.

Your phone.

Your laptop.

Your tablet.

Different storage. Different apps. Different assistants. When you switch devices, you start over.

Open Aya OS removes the device as the center of gravity.

Your workspace lives in the cloud.

Your AI remembers you.

Your environment follows you anywhere a browser opens.

What It Is

Open Aya OS is a browser-native operating system.

No install.

No app store.

No device lock-in.

Open a URL and your full workspace appears:

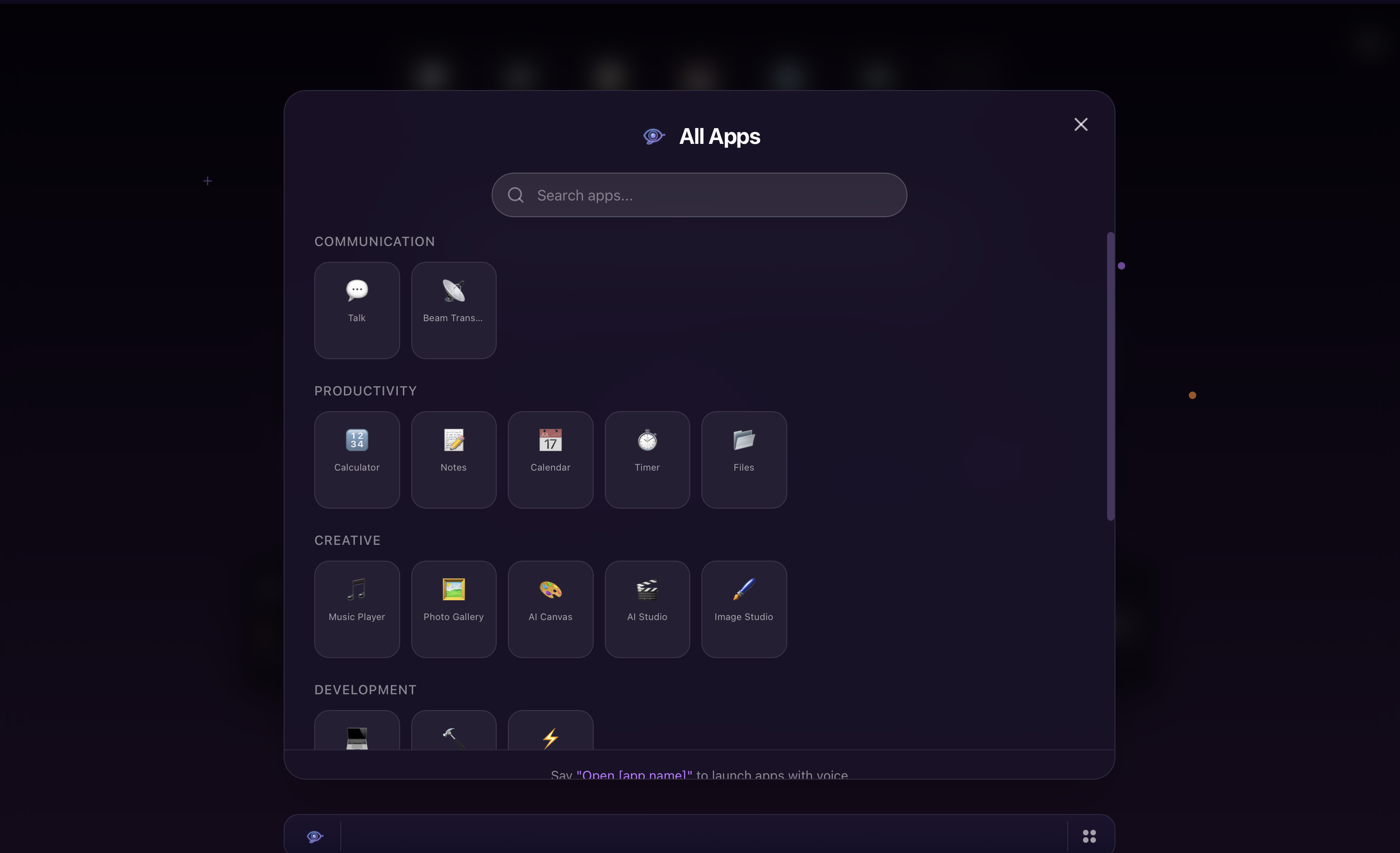

- 37 integrated applications

- A voice-native AI assistant with structured cognition

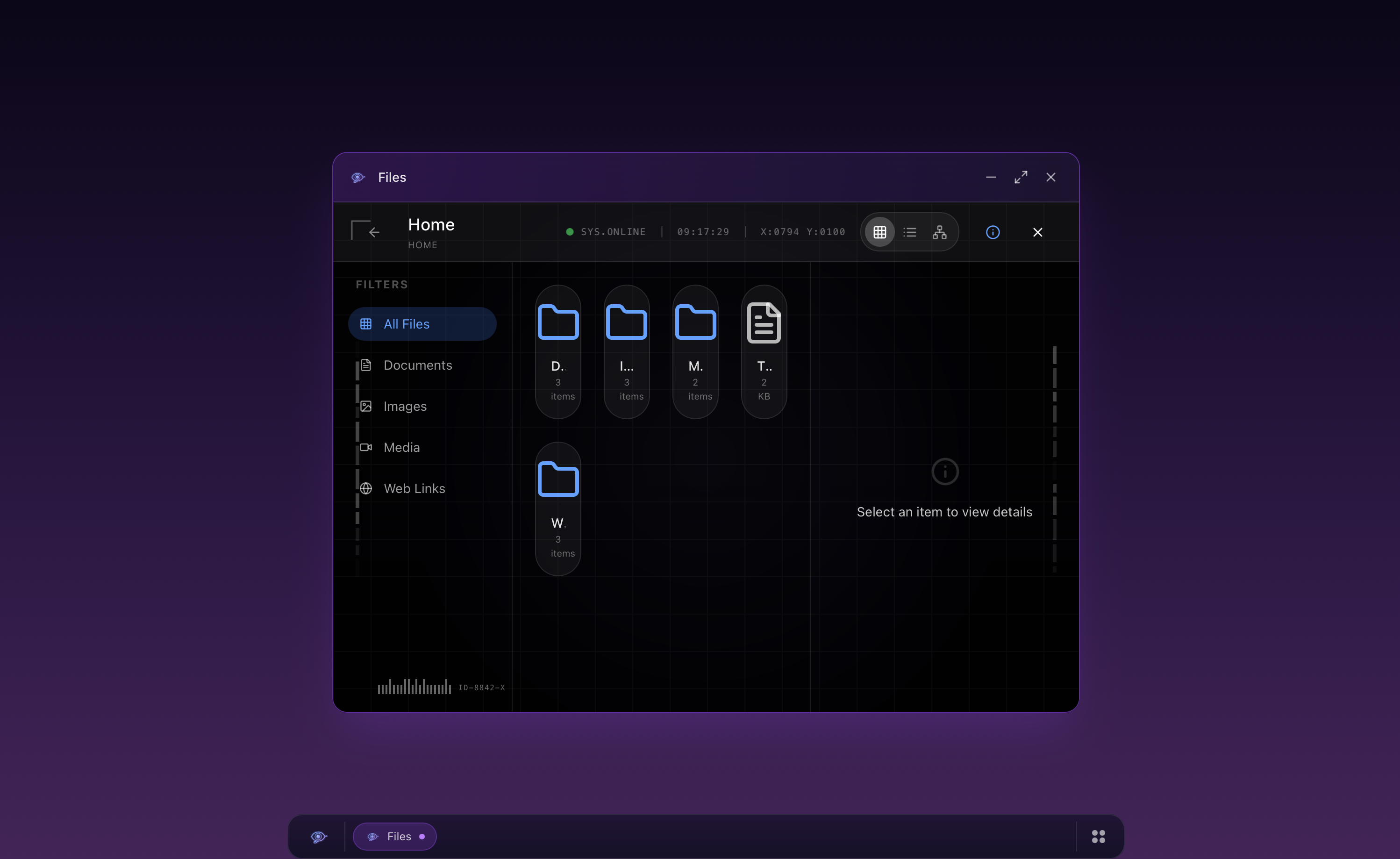

- Infinite cloud-backed storage

- A real OS shell (windows, taskbar, Spotlight, CLI)

It behaves like an operating system. It runs like a website.

What Makes It Different

Aya Is a Cognitive System, Not a Chatbox

Most assistants are stateless wrappers around a model. Aya runs through a structured pipeline:

- Cognitive Spine (8-step reasoning)

- Context Layers (persistent user model + daily state)

- Strategy Auction (6 agents bid on every task)

- Behavioral Learning (16 continuous RL parameters)

- Memory System (episodic, semantic, procedural)

She doesn't just respond. She routes, evaluates, adapts, and remembers. This is architecture -- not prompt stacking.
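To make the Strategy Auction idea concrete, here is a minimal sketch of agents bidding on a task. The source does not publish the auction's API, so every name here (`Task`, `Agent`, `Bid`, `runAuction`, the two sample agents and their scoring rules) is an assumption for illustration only; the real system is described as having six agents.

```typescript
// Hypothetical shapes: the source names the auction but not its interface.
interface Task {
  text: string;
}

interface Bid {
  agent: string;
  score: number; // how strongly this agent wants the task, in [0, 1]
}

interface Agent {
  name: string;
  bid(task: Task): number;
}

// Ask every agent for a bid and pick the highest one.
// The full bid list is returned so the decision can be inspected later.
function runAuction(agents: Agent[], task: Task): { winner: Bid; bids: Bid[] } {
  const bids: Bid[] = agents.map((a) => ({ agent: a.name, score: a.bid(task) }));
  let winner: Bid | undefined;
  for (const b of bids) {
    if (winner === undefined || b.score > winner.score) winner = b;
  }
  if (winner === undefined) throw new Error("no agents registered");
  return { winner, bids };
}

// Two illustrative agents with toy keyword-based scoring.
const agents: Agent[] = [
  { name: "planner", bid: (t) => (/\b(plan|schedule)\b/i.test(t.text) ? 0.9 : 0.2) },
  { name: "tutor", bid: (t) => (/\b(explain|teach)\b/i.test(t.text) ? 0.8 : 0.1) },
];

const result = runAuction(agents, { text: "Explain recursion to me" });
console.log(result.winner.agent, result.bids);
```

Keeping the losing bids alongside the winner is one plausible way a command such as /audit could report which agent won and by how much.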

Voice-Native by Design

120+ voice commands are embedded at the OS level. Not "voice added later." Voice is a first-class input. Custom enunciation preprocessing prevents robotic clipping and mispronunciation. All speech flows through a centralized engine.

Infinite Space

Open Aya OS replaces device storage limits with cloud persistence. No "storage full." No syncing conflicts. No app silos. Your workspace expands with you. Mobile feels like a native app grid. Desktop feels like a real OS.

One Platform, 37 Apps

Productivity. Learning. Creative. Developer. System. All built in the same shell. All searchable via Spotlight (Cmd+K). All voice-accessible. All designed to share context with Aya. Not 37 disconnected apps -- one unified environment.

Inspectable Intelligence

Type /inspect to see what Aya is thinking, /audit to see which agent won the Strategy Auction, or /status to see which signals influenced her behavior. No black box.

By the Numbers

What’s Real Now

- 37 apps live in an OS shell

- 120+ voice commands integrated

- Intelligence pipeline built and running

- Local-first persistence works today

What Ships Next

- Replace mock Supabase with production client

- Auth + cross-device persistence

- Unified global library + search across docs/apps

Intelligence Pipeline (per message)

Every message -- typed or spoken -- passes through this pipeline before the AI model generates a response.
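As a rough mental model, a per-message pipeline can be sketched as an ordered list of stages, each of which reads the message context and adds to it before the model is called. The stage names, their order, and the `MessageContext` shape below are assumptions, not the actual Open Aya OS internals.

```typescript
// Context that accumulates as a message moves through the stages (hypothetical shape).
interface MessageContext {
  input: string;
  source: "typed" | "spoken";
  trace: string[]; // names of the stages that ran, in order
  notes: Record<string, string>; // what each stage contributed
}

interface Stage {
  name: string;
  run(ctx: MessageContext): MessageContext;
}

// Run every stage in order, recording each stage name in the trace.
function runPipeline(
  stages: Stage[],
  input: string,
  source: "typed" | "spoken",
): MessageContext {
  const start: MessageContext = { input, source, trace: [], notes: {} };
  return stages.reduce((ctx, stage) => {
    const next = stage.run(ctx);
    return { ...next, trace: [...next.trace, stage.name] };
  }, start);
}

// Three placeholder stages; the values they write are made up for the demo.
const stages: Stage[] = [
  { name: "context", run: (c) => ({ ...c, notes: { ...c.notes, user: "preferences loaded" } }) },
  { name: "auction", run: (c) => ({ ...c, notes: { ...c.notes, strategy: "tutor" } }) },
  { name: "memory", run: (c) => ({ ...c, notes: { ...c.notes, recall: "no related episodes" } }) },
];

const ctx = runPipeline(stages, "Explain recursion", "spoken");
console.log(ctx.trace.join(" -> ")); // prints "context -> auction -> memory"
```

Because typed and spoken input enter through the same function, both take the same path, which matches the claim that every message passes through the pipeline regardless of how it arrived.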

37 Applications

All built in the same shell. All searchable via Spotlight (Cmd+K). All voice-accessible. All designed to share context with Aya.

Productivity

8 apps: Talk, Notes, Word Processor, Calendar, Calculator, Code Lab, Timer, Work OS

Learning

8 apps: Study Helper, FokusRead, Learning Paths, AI Curriculum, Study OS, Knowledge Map, Knowledge Forge, Typing Game

Creative

6 apps: AI Canvas, AI Studio, Image Studio, Media Hub, Music Player, Beam Transfer

System & Developer

9 apps: Spatial Files, Settings, Admin Dashboard, Developer Hub, Web Browser, Aya Workspace, Aya Memory Viewer, Aya Dashboard, Workflow Composer

Voice + CLI

120+ Voice Commands

"Open notes." "Search for machine learning." "Set a timer for 25 minutes." The entire OS responds to voice. A custom enunciation engine prevents the robotic clipping typical of browser TTS.
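The three example phrases above can be turned into structured actions with a small pattern table. This is only a sketch of one common approach; the action names (`openApp`, `search`, `setTimer`), the `Intent` shape, and the regular expressions are assumptions, and a system with 120+ commands would likely need something more robust than exact patterns.

```typescript
// A matched command: which action to run and with what arguments (hypothetical shape).
interface Intent {
  action: string;
  args: Record<string, string>;
}

interface CommandPattern {
  action: string;
  pattern: RegExp;
  argName: string; // name given to the first capture group
}

// One pattern per example phrase from the text.
const voicePatterns: CommandPattern[] = [
  { action: "openApp", pattern: /^open (.+)$/, argName: "app" },
  { action: "search", pattern: /^search for (.+)$/, argName: "query" },
  { action: "setTimer", pattern: /^set a timer for (\d+) minutes?$/, argName: "minutes" },
];

function parseVoiceCommand(transcript: string): Intent | null {
  // Normalize the transcript: lowercase, trim, drop trailing punctuation.
  const text = transcript.toLowerCase().trim().replace(/[.!?]+$/, "");
  for (const { action, pattern, argName } of voicePatterns) {
    const match = pattern.exec(text);
    if (match) return { action, args: { [argName]: match[1] ?? "" } };
  }
  return null; // no pattern matched; a real system might fall back to the assistant
}

console.log(parseVoiceCommand("Set a timer for 25 minutes."));
console.log(parseVoiceCommand("Open notes."));
```

Returning `null` for unmatched speech leaves room for a fallback, for example handing the transcript to the assistant as an ordinary message.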

14 CLI Commands

The set includes /remember, /forget, /teach, /mode, /depth, /status, /inspect, /audit, /export, /persona, /health, /reset, and /help. Tab completion. Power users control Aya's behavior directly.
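Tab completion over slash commands usually comes down to prefix matching. The sketch below uses the command names listed above; the function names `completeCommand` and `parseCommandLine`, and the `focus` argument in the usage line, are hypothetical and not taken from the product.

```typescript
// Command names as listed in the text.
const CLI_COMMANDS: string[] = [
  "/remember", "/forget", "/teach", "/mode", "/depth", "/status", "/inspect",
  "/audit", "/export", "/persona", "/health", "/reset", "/help",
];

// Return every command that starts with what the user has typed so far.
function completeCommand(partial: string): string[] {
  return CLI_COMMANDS.filter((c) => c.startsWith(partial));
}

// Split an input line into a known command and its arguments.
function parseCommandLine(line: string): { command: string; args: string[] } | null {
  const [head, ...args] = line.trim().split(/\s+/);
  if (!head || !CLI_COMMANDS.some((c) => c === head)) return null;
  return { command: head, args };
}

console.log(completeCommand("/re")); // prints [ '/remember', '/reset' ]
console.log(parseCommandLine("/mode focus"));
```

When the prefix matches exactly one command, the shell can fill it in directly; when it matches several, it can show the candidates and wait for more input.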

Where It Fits in Serpens

OpenAya is the cognitive interface platform of the Serpens stack. It informs UI patterns across all Serpens products, drives voice and ambient computing research, and provides the workspace layer that other platforms (SerpenSky, Global Health IQ) connect to.

Origin

OpenAya began with a vision for reimagining how we interact with our workspaces:

The Future Work Space -- As Convention Would Have It

by Richie Adomako

Open Aya OS is live in private preview.

The differentiated core -- intelligence + environment -- is built. What ships next is production persistence and auth. The schema exists. RLS exists. Tables exist. This is plumbing -- not invention.